Enterprise data integration platforms connect the CRMs, ERPs, databases, and SaaS applications that run day-to-day operations. When these systems fall out of sync, sales teams quote from outdated pricing, warehouse staff ship against stale inventory counts, and finance reconciles conflicting records manually. The cost of poor data integration is not theoretical. Retail inventory distortion from synchronization failures costs an estimated $1.77 trillion annually, and 100ms of added latency can reduce conversion rates by 1%.

The challenge for data and engineering leaders is choosing the right integration architecture. Enterprise ETL and ELT platforms are optimized for analytics pipelines that move data one-way into warehouses. iPaaS tools connect SaaS applications through workflow automation. Bi-directional sync platforms keep operational systems in lockstep in real time. Each category solves a different problem, and selecting the wrong one creates more friction than it eliminates.

This comparison chart evaluates the leading enterprise data integration tools across architecture, scalability, reliability, connector ecosystems, security certifications, and licensing models to help technical leaders match each platform to its strongest use case.

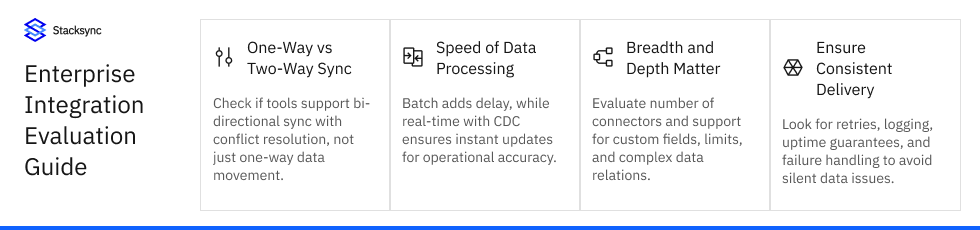

A meaningful data platform integration capabilities comparison requires evaluating seven dimensions that directly affect production reliability and total cost of ownership.

Does the platform support unidirectional sync (source to warehouse) or true bi-directional sync that keeps operational systems consistent? Most enterprise ETL and ELT platforms move data in one direction. Bi-directional tools that connect CRM, ERP, and database systems need conflict resolution logic to prevent data corruption when changes to the same record originate from multiple systems at the same time.

Batch processing runs on schedules (hourly, daily) and introduces inherent latency. Real-time event-driven processing uses change data capture (CDC) to propagate field-level updates within milliseconds. The choice depends on whether downstream systems tolerate stale data. Analytics pipelines typically accept batch latency. Operational systems that drive order fulfillment, invoicing, or customer-facing workflows require real-time processing.

Evaluate both breadth (number of connectors) and depth (support for custom objects, custom fields, and complex record associations). Scalable platforms with business-specific connectors handle edge cases such as NetSuite SuiteCloud concurrency limits, Salesforce governor limits, and Shopify rate-limiting buckets without manual workarounds.

Options range from SQL-based transformation engines in ELT platforms to visual no-code mappers in iPaaS tools. End-to-end data integration tools for enterprise environments need to handle schema differences between systems automatically, including data type conversion, field name mapping, and record association sequencing.
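As a rough illustration of the field name mapping and data type conversion described above, the sketch below maps one source record into a target schema. The field names, the `FIELD_MAP` table, and the `map_record` helper are hypothetical and not taken from any specific platform.

```python
from datetime import datetime, timezone
from decimal import Decimal

# Hypothetical mapping: source field -> (target field, type converter).
FIELD_MAP = {
    "Name": ("company_name", str),
    "AnnualRevenue": ("annual_revenue", lambda v: Decimal(str(v))),
    "LastModifiedDate": (
        "updated_at",
        lambda v: datetime.fromisoformat(v).astimezone(timezone.utc),
    ),
}


def map_record(source: dict) -> dict:
    """Rename fields and convert types; skip fields that are missing or null."""
    target = {}
    for src_field, (dst_field, convert) in FIELD_MAP.items():
        value = source.get(src_field)
        if value is not None:
            target[dst_field] = convert(value)
    return target


row = map_record(
    {
        "Name": "Acme",
        "AnnualRevenue": 1250000.5,
        "LastModifiedDate": "2024-03-01T12:00:00+00:00",
    }
)
print(row)
```

A real platform would typically infer or store this mapping as configuration. The point here is only that renaming and type conversion happen in one pass per record.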

Reliability separates production-grade integration platforms from prototyping solutions. Evaluate guaranteed data delivery, automated retry logic, dead-letter queues for failed records, detailed audit logging, and the vendor's uptime guarantees. Silent failures in integration pipelines compound into data drift that takes weeks to reconcile.
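The retry and dead-letter pattern mentioned above can be sketched in a few lines. This is a minimal illustration with an in-memory list standing in for a dead-letter queue. The names `deliver_with_retry` and `make_flaky_sender` are hypothetical, and a production system would use a durable queue rather than a Python list.

```python
import time


def deliver_with_retry(record, send, dead_letters, max_attempts=3, base_delay=0.01):
    """Try to send a record, backing off exponentially between attempts.

    After max_attempts failures the record is parked in dead_letters
    instead of being dropped silently.
    """
    last_error = None
    for attempt in range(1, max_attempts + 1):
        try:
            send(record)
            return True
        except Exception as exc:  # broad on purpose: any failure triggers a retry
            last_error = exc
            if attempt < max_attempts:
                time.sleep(base_delay * 2 ** (attempt - 1))
    dead_letters.append(
        {"record": record, "error": str(last_error), "attempts": max_attempts}
    )
    return False


def make_flaky_sender(failures):
    """Build a fake sender that raises for the first `failures` calls."""
    state = {"calls": 0}

    def send(record):
        state["calls"] += 1
        if state["calls"] <= failures:
            raise ConnectionError("simulated outage")

    return send


dead_letter_queue = []
recovered = deliver_with_retry({"id": 1}, make_flaky_sender(2), dead_letter_queue)
parked = deliver_with_retry({"id": 2}, make_flaky_sender(5), dead_letter_queue)
```

In this run the first record succeeds on its third attempt, while the second exhausts its retries and lands in the dead-letter list, where it can be inspected and replayed.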

The platform must scale from thousands to billions of records without manual infrastructure changes or performance degradation. A scalability comparison should test behavior under peak loads: month-end closes, Black Friday traffic spikes, and bulk data migrations. Scalable ELT platforms handle this through cloud-native autoscaling, while operational sync platforms need sustained throughput at consistently low latency.

Enterprise data integration platforms must meet strict security standards. Evaluate SOC 2 Type II, GDPR, HIPAA BAA, ISO 27001, and CCPA compliance. A security and compliance comparison should also cover encryption at rest and in transit, SSO/MFA support, VPC peering, and role-based access controls.

This chart provides a high-level overview of leading enterprise data integration platforms, highlighting their architecture, processing model, and primary use case.

| Platform | Architecture and Best For | Key Differentiators |
|---|---|---|
| Informatica IDMC | Batch ETL with governance. Large enterprises needing multi-cloud data management and quality. | End-to-end data quality, lineage, cataloging. Custom enterprise licensing. G2: 4.2/5. |
| Talend (Qlik) | Batch and near-real-time ETL. Full-stack data platform with open-source option. | 1,000+ connectors, big data support (Hadoop, Spark), data quality suite. G2: 4.3/5. |
| Jitterbit Harmony | Low-code iPaaS. Hybrid cloud and on-premise workflow automation and API management. | Pre-built templates, graphical design studio, centralized monitoring. G2: 4.6/5. |
| Fivetran | Automated ELT. Data teams loading SaaS sources into cloud warehouses with minimal config. | 300+ connectors, automated schema migration, warehouse-native transforms. MAR pricing. |
| Estuary Flow | Real-time streaming. CDC-native pipelines for warehouses, lakes, and operational systems. | True streaming architecture, combined batch and real-time in one pipeline framework. |
| Stacksync | Real-time bi-directional sync. Operational consistency across CRM, ERP, and databases. | Sub-second CDC sync, conflict resolution, 200+ connectors, flat-rate pricing, no-code setup. |

ETL and ELT platforms move data one way into warehouses for analytics. They do not maintain consistency across live operational systems.

iPaaS tools automate workflows between apps, but they require custom configuration to approximate bi-directional sync and offer no native conflict resolution.

Bi-directional sync platforms like Stacksync keep CRM, ERP, and database records identical in real time with built-in conflict handling.

The comparison chart provides a high-level view. The profiles below examine each platform's architecture, strengths, and limitations to support a more informed evaluation of Informatica, Talend (Qlik), and the other ETL, iPaaS, and operational sync platforms listed.

Informatica is a long-standing leader in the enterprise ETL data integration space. IDMC provides end-to-end data management including integration, quality, governance, cataloging, and master data management. It is built for large enterprises running complex hybrid and multi-cloud environments where data governance and lineage tracking are non-negotiable requirements.

Strengths: comprehensive data quality and governance suite, strong metadata management, broad connector library, and proven scalability for batch ETL workloads across petabyte-scale datasets.

Limitations: the platform requires specialized teams and significant implementation investment. Licensing is enterprise-contract based with custom pricing, which can make cost forecasting difficult for mid-market teams. Real-time capabilities exist but are secondary to its batch-oriented architecture.

Best fit: large enterprises with dedicated data engineering teams that need robust ETL, governance, and data quality across multi-cloud environments.

Talend, now part of Qlik, offers a full-stack data platform with over 1,000 pre-built connectors. It supports both batch and near-real-time processing, data quality, and governance capabilities. Talend Cloud provides a managed SaaS experience, while Talend Open Studio remains available as an open-source option for teams that want more control.

Strengths: massive connector ecosystem, open-source flexibility, strong data quality features, and native big data support (Hadoop, Spark).

Limitations: the full platform has a steep learning curve. Enterprise licensing is custom-priced and can escalate with scale. Real-time processing is available but not the primary architectural strength.

Best fit: enterprises with data engineering teams needing a flexible, full-stack platform that handles both analytics and data quality across large connector footprints.

Jitterbit's Harmony platform is a low-code iPaaS that combines integration, API management, and workflow automation. It connects SaaS, on-premise, and cloud applications using pre-built templates and a graphical design studio. The platform supports both batch and real-time processes with a centralized management console for monitoring.

Strengths: fast time-to-value with pre-built templates, strong API management capabilities, hybrid cloud and on-premise support, and a high G2 score (4.6/5) reflecting user satisfaction.

Limitations: bi-directional sync requires custom workflow configuration rather than native support. Scalability for high-volume, low-latency operational sync is not its primary design target. Pricing is tiered and can increase with connector count and transaction volume.

Best fit: businesses seeking a low-code iPaaS for hybrid integration and API management across SaaS applications and on-premise systems.

Fivetran is an automated ELT platform focused on data movement into cloud warehouses. It offers 300+ pre-built connectors with automated schema migration and incremental loading. The platform handles extraction and loading automatically, leaving transformations to the target warehouse using dbt or SQL.

Strengths: minimal configuration, automated schema handling, strong warehouse support (Snowflake, BigQuery, Redshift, Databricks), and a large connector library for SaaS data sources.

Limitations: unidirectional only (source to warehouse). No operational sync or bi-directional capabilities. Monthly Active Row (MAR) pricing can spike unpredictably during high-volume periods, which makes cost control a challenge for teams evaluating platforms for enterprise data consolidation.

Best fit: data teams that need automated, reliable cloud ELT into analytics warehouses without managing infrastructure.

Estuary is a data integration platform built on real-time streaming architecture. It uses CDC to capture changes and stream them into warehouses, lakes, or operational systems. The platform combines real-time and batch capabilities in a single pipeline framework.

Strengths: true real-time streaming architecture, CDC-native, and the ability to handle both analytics and operational data movement patterns.

Limitations: smaller connector ecosystem compared to established players. Enterprise adoption is still growing, and community resources are more limited.

Best fit: data engineering teams building real-time streaming pipelines who need CDC-native architecture with warehouse and operational system support.

Understanding the architectural differences between enterprise ETL and ELT platforms is fundamental to selecting the right tool. This distinction drives every downstream decision about latency, scalability, and operational fit.

Traditional ETL platforms extract data from source systems on a schedule, transform it in a staging layer, and load the results into a destination (typically a data warehouse). The batch interval, whether hourly, daily, or weekly, determines data freshness. This model works well for analytics and reporting workloads where teams need aggregated data but can tolerate latency.

The trade-off is that batch ETL introduces an inherent delay between when data changes in the source system and when it becomes available downstream. For analytics, this is acceptable. For operational systems where stale data causes order errors or billing discrepancies, batch latency creates direct business risk.

Real-time enterprise data integration tools use event-driven architectures and CDC to detect changes the moment they occur. Instead of polling on a schedule, these platforms capture field-level updates and propagate them to downstream systems within milliseconds or seconds.
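To make "field-level updates" concrete, the sketch below derives the changed fields from a single change event. The event shape (a `before` and an `after` row image) is a hypothetical format loosely modeled on what many CDC tools emit. The function name `field_level_changes` is likewise illustrative.

```python
def field_level_changes(before: dict, after: dict) -> dict:
    """Return only the fields whose values differ between two row images."""
    changed = {}
    for key in sorted(before.keys() | after.keys()):
        old, new = before.get(key), after.get(key)
        if old != new:
            changed[key] = {"old": old, "new": new}
    return changed


# Hypothetical change event for one updated CRM row.
event = {
    "op": "update",
    "table": "opportunity",
    "before": {"id": 42, "stage": "Proposal", "amount": 18000},
    "after": {"id": 42, "stage": "Closed Won", "amount": 18000},
}

delta = field_level_changes(event["before"], event["after"])
print(delta)
```

Propagating only the delta, rather than the whole row, is what lets a downstream system apply the stage change without overwriting fields that did not change.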

Cloud data integration platforms for enterprise analytics increasingly support micro-batch or streaming modes alongside traditional batch. Fivetran, for example, offers 5-minute sync intervals. Estuary provides true streaming. The distinction matters when comparing tools on pricing, features, and scalability, because real-time architectures require different infrastructure than batch schedulers.

Both ETL and ELT are fundamentally one-way: data moves from sources to a destination. Bi-directional sync maintains consistency across two or more live systems simultaneously. When a record updates in Salesforce, the change propagates to NetSuite and PostgreSQL. When the same record updates in NetSuite, those changes flow back.

This requires conflict resolution, field-level change tracking, and guaranteed delivery. It is not a workflow automation problem (iPaaS) or an analytics loading problem (ELT). It is an operational consistency problem that requires purpose-built architecture.
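One simple conflict resolution policy is field-level last-writer-wins: when two systems change the same field, the most recent change is kept, and changes to different fields are merged. The sketch below illustrates that policy only. It is an assumption chosen for illustration, not a description of how Stacksync or any other platform resolves conflicts, and the `FieldChange` type and `resolve` function are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class FieldChange:
    field: str
    value: object
    modified_at: float  # epoch seconds reported by the source system
    source: str


def resolve(changes_a, changes_b):
    """Merge two change sets, keeping the latest change per field.

    Ties on timestamp are broken by source name so the outcome is
    deterministic regardless of the order the changes arrive in.
    """
    winners = {}
    for change in [*changes_a, *changes_b]:
        current = winners.get(change.field)
        if current is None or (change.modified_at, change.source) > (
            current.modified_at,
            current.source,
        ):
            winners[change.field] = change
    return {field: change.value for field, change in winners.items()}


from_salesforce = [
    FieldChange("phone", "555-0100", 100.0, "salesforce"),
    FieldChange("email", "ops@example.com", 101.0, "salesforce"),
]
from_netsuite = [
    FieldChange("phone", "555-0199", 105.0, "netsuite"),
    FieldChange("credit_limit", 50000, 90.0, "netsuite"),
]

merged = resolve(from_salesforce, from_netsuite)
print(merged)
```

Here the later NetSuite phone number wins, while the email and credit limit changes, which do not collide, are both preserved. Last-writer-wins depends on reasonably synchronized clocks, so other policies (for example, a designated system of record per field) may suit some deployments better.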

For teams evaluating reliable cloud integration data syncing solutions, the most critical question is not which platform has the most connectors, but which one guarantees data consistency when things go wrong.

The most reliable database integration solutions share four characteristics:

A critical gap exists between analytics-focused ETL and ELT tools and generic iPaaS platforms. The core operational challenge for most businesses is not moving data into a warehouse for analysis. It is ensuring data stays consistent and current across the live systems that run the business: CRM, ERP, databases, and ecommerce platforms.

Stacksync is built to solve this specific problem. It is a real-time, bi-directional data synchronization platform with 200+ connectors designed for operational consistency rather than analytics loading.

Unlike platforms that offer two separate one-way syncs configured as workflows, Stacksync provides native bi-directional synchronization with built-in conflict resolution. When data updates in Salesforce, the change propagates to NetSuite and your production PostgreSQL database in sub-second time, and vice versa.

Core technical capabilities:

Pricing is a persistent pain point in the enterprise data integration tools market. Consumption-based models (per-row, per-MAR, per-API-call) create unpredictable costs that escalate as data volumes grow, a dynamic known as the "success tax."

Stacksync uses flat-rate pricing: Starter at $1,000/month, Pro at $3,000/month, and custom Enterprise plans. This model gives teams cost predictability regardless of data volume spikes during month-end closes, seasonal peaks, or bulk migrations.
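The difference between the two pricing models is easiest to see with arithmetic. In the sketch below, the $3,000 flat rate is the Pro tier quoted above. The per-million-row consumption price and the monthly volumes are invented purely for illustration and do not reflect any vendor's actual rates.

```python
FLAT_RATE_USD = 3000  # Pro tier quoted in the text

# Assumption for illustration only, not a real vendor price.
HYPOTHETICAL_USD_PER_MILLION_ROWS = 500


def consumption_cost(rows: int) -> int:
    """Monthly cost in USD under the hypothetical per-row model."""
    return rows * HYPOTHETICAL_USD_PER_MILLION_ROWS // 1_000_000


# Hypothetical monthly row volumes.
monthly_rows = {
    "typical month": 4_000_000,
    "month-end close": 9_000_000,
    "seasonal peak": 20_000_000,
}

costs = {label: consumption_cost(rows) for label, rows in monthly_rows.items()}
break_even_rows = FLAT_RATE_USD * 1_000_000 // HYPOTHETICAL_USD_PER_MILLION_ROWS

for label, cost in costs.items():
    print(f"{label}: consumption ${cost:,} vs flat ${FLAT_RATE_USD:,}")
print(f"break-even at {break_even_rows:,} rows per month")
```

Under these assumed numbers, consumption pricing is cheaper in a typical month but costs more than three times the flat rate at the seasonal peak. The break-even point shifts with the real per-row price, so the comparison is worth rerunning with actual quotes.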

Selecting the best enterprise data integration platform requires mapping your primary technical objective to the right architectural category.